Identifying biomarkers of neurological and psychiatric clinical conditions from brain physiology has many challenges.

What is a Biomarker?

The general idea of a biomarker is a biological or physiological observation that indicates or predicts something clinically relevant. The most common biomarkers in use are body temperature and blood pressure, which have been around for over a hundred years. An elevated body temperature is most often an indication of an active underlying infection, while high blood pressure is more an indication of risk of atherosclerotic cardiovascular disease. They are therefore very different kinds of ‘biomarkers’, which raises the question: what specific conditions must be satisfied for something to be a biomarker?

This definition has evolved over time. In 1987 the National Research Council and National Academy of Sciences convened a Committee on Biological Markers, which defined biomarkers as indicators of events in biological systems that could be of three types: indicating exposure, effect or susceptibility. Blood pressure would fall under the last of these. Subsequently the FDA put in place a process to determine what qualifies as a biomarker from a regulatory point of view. This looks quite different: the FDA distinguishes between ‘biomarkers’ and ‘surrogate biomarkers’, defining a biomarker as a laboratory measurement that reflects the activity of a disease process and correlates with disease progression, and a surrogate biomarker as ‘a laboratory measurement or physical sign that is used in therapeutic trials as a substitute for a clinically meaningful endpoint that is a direct measure of how a patient feels, functions, or survives and is expected to predict the effect of the therapy’. Surrogate markers are thus very important for clinical trials, and the FDA has authority to approve a drug that “has an effect on a clinical endpoint or on a surrogate endpoint that is reasonably likely to predict clinical benefit.”

The necessary and sufficient conditions of a biomarker

The devil is always in the details though. What specific conditions does a biomarker have to satisfy and with what parameters of quantitative stringency?

The ideal biomarker would:

– Be perfectly correlated with the clinical endpoint

– Have little to no variability under normal circumstances

– Have a very good signal-to-noise ratio

– Change quickly and reliably in response to changes in the clinical endpoint

Such an ideal is impossible to find in a complex system such as the human body. But what level of quantitative certainty is required? This again is subjective: it depends on whether the biomarker can outperform the alternatives.

Biomarkers of the brain

The few biomarkers relating to the brain tend to be structural in nature. For example, the use of MRI to evaluate tumors or the progression of Multiple Sclerosis has been around for a while. In 2018, the FDA approved the use of a ubiquitin-C-terminal-hydrolase-L1 (UCH-L1) and glial fibrillary acidic protein (GFAP) assay for determining the clinical necessity of obtaining a CT scan in patients with mild Traumatic Brain Injury (TBI). Here the results published in Lancet Neurology are pretty scant [1]. The study, conducted by Abbott, involved 450 people, and the main readout they report is based on an ROC curve relating plasma concentrations of the molecules to a positive or negative CT and MRI scan. Here they found an area under the curve (AUC) of 0.77.
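To make a number like an AUC of 0.77 easier to interpret, here is a minimal sketch of what the AUC measures: the probability that a randomly chosen scan-positive patient has a higher marker value than a randomly chosen scan-negative patient. The function name `roc_auc` and all of the values below are hypothetical illustrations, not data or code from the study.

```python
def roc_auc(scores, labels):
    """AUC as the fraction of (positive, negative) pairs in which the
    positive case has the higher score; ties count as half a win."""
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative case")
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos) * len(neg))


# Hypothetical plasma concentrations (arbitrary units) and scan outcomes
# (1 = positive scan, 0 = negative scan). Made-up numbers for illustration.
concentrations = [0.2, 0.4, 0.5, 0.9, 0.3, 0.8, 1.1, 0.6]
scan_positive = [0, 0, 1, 1, 0, 0, 1, 1]

auc = roc_auc(concentrations, scan_positive)
print(f"AUC = {auc:.3f}")
```

With these made-up numbers, 14 of the 16 positive/negative pairs are ordered correctly, giving an AUC of 0.875. An AUC of 0.5 corresponds to chance and 1.0 to perfect separation, so 0.77 means roughly one in four such pairs is ordered the wrong way.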

The only neuroimaging biomarker the FDA has approved has been the theta-beta ratio measured at the Cz electrode for diagnosis of ADHD in children (in 2013). However, this measure did not qualify as a standard biomarker but rather was to be used only in combination with clinical evaluation. Subsequently the Subcommittee of the American Academy of Neurology concluded that “It is unknown whether a combination of standard clinical examination and EEG theta/beta power ratio increases diagnostic certainty of ADHD compared with clinical examination alone”.
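For readers unfamiliar with the measure, the following is a rough sketch of how a theta/beta power ratio could be computed from a single EEG channel. It is not the procedure used by the approved device. The band edges (theta 4 to 8 Hz, beta 13 to 30 Hz), the function names and the synthetic signal are all assumptions made for illustration, and a plain discrete Fourier transform stands in for the more careful spectral estimation a real pipeline would use.

```python
import cmath
import math


def band_power(signal, fs, f_lo, f_hi):
    """Sum of squared DFT magnitudes for frequencies in [f_lo, f_hi) Hz."""
    n = len(signal)
    power = 0.0
    for k in range(1, n // 2):
        freq = k * fs / n  # frequency of DFT bin k in Hz
        if f_lo <= freq < f_hi:
            coeff = sum(
                x * cmath.exp(-2j * math.pi * k * t / n)
                for t, x in enumerate(signal)
            )
            power += abs(coeff) ** 2
    return power


def theta_beta_ratio(signal, fs, theta=(4.0, 8.0), beta=(13.0, 30.0)):
    """Ratio of theta-band power to beta-band power (assumed band edges)."""
    return band_power(signal, fs, *theta) / band_power(signal, fs, *beta)


# Synthetic two-second "recording" sampled at 128 Hz: a 6 Hz (theta)
# component with amplitude 2 plus a 20 Hz (beta) component with amplitude 1.
fs = 128
n_samples = 2 * fs
signal = [
    2.0 * math.sin(2 * math.pi * 6 * t / fs)
    + 1.0 * math.sin(2 * math.pi * 20 * t / fs)
    for t in range(n_samples)
]

ratio = theta_beta_ratio(signal, fs)
print(f"theta/beta ratio = {ratio:.2f}")
```

Because power scales with amplitude squared, the ratio for this synthetic signal comes out close to 4. Real EEG is far messier, and the result depends on choices such as band edges, window length and artifact handling.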

Neuroimaging Biomarkers of Behavior

This brings us to the enormous challenge of identifying biomarkers of behavior in the brain with neuroimaging. Here are the many issues:

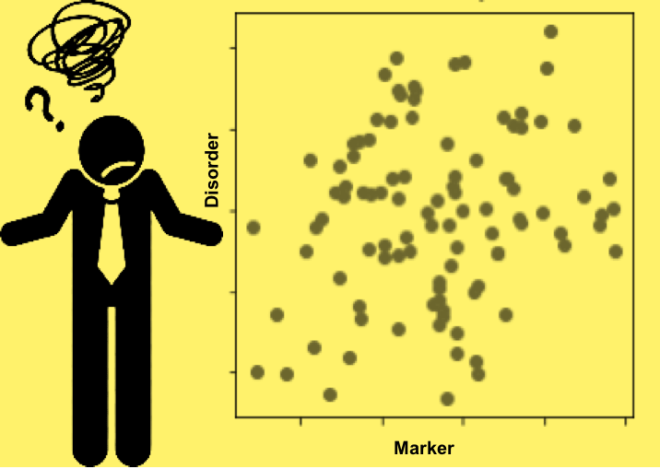

1) Behavioral ‘disorders’ are ambiguous to begin with and make for a very noisy clinical endpoint. What one means by a positive diagnosis for ADHD, Depression, Autism or any other such psychiatric disorder depends on the screening tool used. Besides, even within the simplest screening tool, like the HAM-D for depression, there are over 100 combinations of symptoms that can give you the same diagnosis. So the ‘clinical endpoint’ of behavioral disorders is itself too noisy.

2) Neurological disorders too suffer some of the same ambiguities. For example, consider the problem of seizure detection. It is made extremely hard by the huge heterogeneity of seizures themselves.

3) Brain physiology varies enormously among individuals, as do many features of perception, cognition and behavior. You can learn about some of these dimensions from the talks at our symposium on inter- and intra-person variability, which are posted online. In our own work we have found that if you take a large enough sample of people across a large enough cross section of life experience, you can find several-fold differences in several metrics. What this means is that a value that represents an increase for one person is ‘normal’ for someone else, and vice versa, making absolute determinations very difficult.

4) Brain physiology varies enormously within individuals, across the lifespan and even moment to moment. As a case in point, see some of the talks at our symposium on inter- and intra-person variability that cover these aspects, as well as some recently reported challenges in fMRI.

5) Most metric changes explored so far are not unique to a particular clinical endpoint. For example, changes in various spectral characteristics reported for one psychiatric disorder are also reported for many other psychiatric disorders, suggesting that they *may* be necessary but are clearly not sufficient [2].

6) And finally, neuroimaging methods and preprocessing have substantial variability – particularly EEG, where the number of manufacturers leads to an enormous number of measurement constellations and preprocessing algorithms. This can make a measurement difficult to reproduce, thereby limiting its utility.

The challenge therefore is to identify more specific measures that are more personalized to the physiological context and outcome of the individual. No small task.
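As a toy illustration of why personalization matters, the sketch below scores the same new reading in two ways: against a pooled population reference and against that individual's own baseline. The metric, the values and the `z_score` helper are all hypothetical. This is one simple way to frame the idea, not a method taken from our work.

```python
from statistics import mean, stdev


def z_score(value, reference):
    """How many sample standard deviations `value` lies from the reference mean."""
    return (value - mean(reference)) / stdev(reference)


# Hypothetical repeated measurements of some EEG metric (arbitrary units)
# from two people whose typical levels differ by more than two-fold.
person_a_baseline = [4.0, 4.2, 3.9, 4.1, 3.8]
person_b_baseline = [9.8, 10.1, 10.0, 9.9, 10.2]
population = person_a_baseline + person_b_baseline

new_value_a = 6.0  # a new reading from person A

z_population = z_score(new_value_a, population)
z_personal = z_score(new_value_a, person_a_baseline)

print(f"vs population:   z = {z_population:+.2f}")
print(f"vs own baseline: z = {z_personal:+.2f}")
```

Against the pooled reference the new reading looks unremarkable, because it sits between the two people's typical levels. Against person A's own baseline it is a large deviation. A fixed population threshold would miss this change entirely.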

[1] Yue JK, Yuh EL, Korley FK, et al. Lancet Neurol 2019;

[2] Newson JJ, Hunter D, Thiagarajan TC. Frontiers in Psychiatry, Feb 2020

It is often forgotten that there are two “types” of body temperature: body temperature taken during the day, such as in a physician’s office, and basal body temperature (BBT), taken orally immediately upon waking. BBT carries much information, often predictive information, while daytime temperature is useful only for determining whether or not a fever exists.

EEG data observed when the patient is considered brain-dead is most intriguing. It suggests something incorporeal is occurring.
