Often artifact removal means throwing out a lot of valuable signal. Is it worthwhile to throw the baby out with the bathwater?

The EEG signal is sensitive to movements of the facial muscles, such as eyeblinks and furrowing of the brow, and to a host of other head movements. The eyeblink is a particularly stereotypical movement artifact and lends itself to more specific extraction (see Getting rid of eye blink in the EEG). However, in the eyes closed paradigm, eyeblink does not dominate, but a broad variety of other movement artifacts can occur. Consequently, most studies employ some form of artifact removal. Many people rely on a subjective rule: when you see a high amplitude event, mark it as an artifact. Alternatively, there are ICA based methods and other automated feature extraction methods.

This post is not about a specific method but is meant to illustrate a different challenge in removing less stereotypical movement artifacts: as the accuracy of removing artifacts increases, so too does the removal of real signal. This can be a consequence of how good your algorithm is, but also of the fact that every artifact comes mixed with signal characteristics that influence what you are lopping off. As a result, the remaining signal ends up artifact free but also substantially biased by the removal of sections of true signal of a particular characteristic, which can completely change the result.

A feature extraction example

This particular example utilizes 20 recordings where careful visual observation has been used to mark out periods of recognizable movements including eye blinks, head movement and other facial movements during an eyes closed paradigm where eyeblink is not the main concern. A machine learning approach using numerous features of the EEG was then employed to identify these marked periods. There are two parameters here – 1) the threshold used to determine whether a period contained an artifact and 2) the signal length on which the algorithm was trained.
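To make the two parameters concrete, here is a minimal sketch in which a simple peak-to-peak amplitude rule stands in for the machine learning classifier (the classifier and its features are not described in this post). The function name `flag_segments`, the toy signal and its units are all hypothetical.

```python
def flag_segments(signal, segment_len, threshold):
    """Split the signal into consecutive, non-overlapping segments and flag
    each segment whose peak-to-peak amplitude exceeds the threshold.
    A stand-in rule for illustration, not the classifier used in the post."""
    flags = []
    for start in range(0, len(signal) - segment_len + 1, segment_len):
        segment = signal[start:start + segment_len]
        peak_to_peak = max(segment) - min(segment)
        flags.append(peak_to_peak > threshold)
    return flags


# Hypothetical toy signal (arbitrary units): quiet, one large deflection, quiet.
signal = [5, -4, 6, -5, 4, -6, 150, -120, 5, -5, 3, -4]

flags_short = flag_segments(signal, segment_len=4, threshold=200)
flags_long = flag_segments(signal, segment_len=6, threshold=200)

# Samples that would be discarded if every flagged segment were removed.
removed_short = sum(flags_short) * 4
removed_long = sum(flags_long) * 6
print(flags_short, removed_short)
print(flags_long, removed_long)
```

Both settings flag the same event, but the longer segment discards more of the surrounding samples with it, which is one way segment length interacts with how much clean signal is lost.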

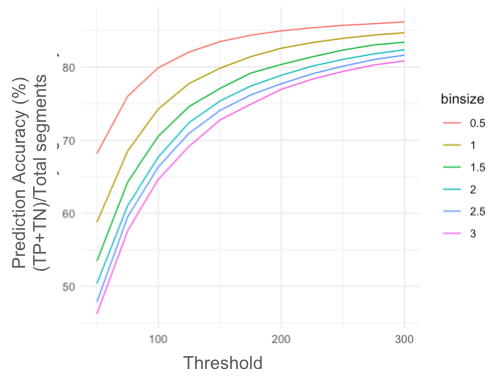

The first figure shows the accuracy: the fraction of segments correctly predicted as ‘artifact’ or ‘clean’. Given that the algorithm is trying to identify artifacts, a correct identification of an artifact is a true positive (TP) and a correct identification of a clean segment is a true negative (TN). The accuracy is (TP+TN)/Total Segments. Here you can see that at the smallest bin size (red line) and a threshold of 200, you can hit an accuracy of 90%. That seems pretty good, and it might seem like a no brainer to just go ahead with those parameters and process the data. However, when you look deeper the problems emerge.
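As a minimal sketch of how this accuracy is computed, assume each segment has a visually marked label and a predicted label. The label lists below are made up for illustration and are not the data behind the figure.

```python
def confusion_counts(marked, predicted):
    """Count TP, TN, FP, FN, where 'positive' means 'artifact'."""
    tp = tn = fp = fn = 0
    for is_artifact, flagged in zip(marked, predicted):
        if is_artifact and flagged:
            tp += 1  # artifact correctly flagged
        elif not is_artifact and not flagged:
            tn += 1  # clean segment correctly kept
        elif not is_artifact and flagged:
            fp += 1  # clean segment wrongly flagged
        else:
            fn += 1  # artifact missed
    return tp, tn, fp, fn


def accuracy(tp, tn, fp, fn):
    """(TP + TN) / total segments."""
    return (tp + tn) / (tp + tn + fp + fn)


# Hypothetical labels for ten segments (True = artifact).
marked = [True, False, False, True, False, False, False, False, True, False]
predicted = [True, False, True, False, False, False, False, False, True, False]

counts = confusion_counts(marked, predicted)
acc = accuracy(*counts)
print(counts, acc)
```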

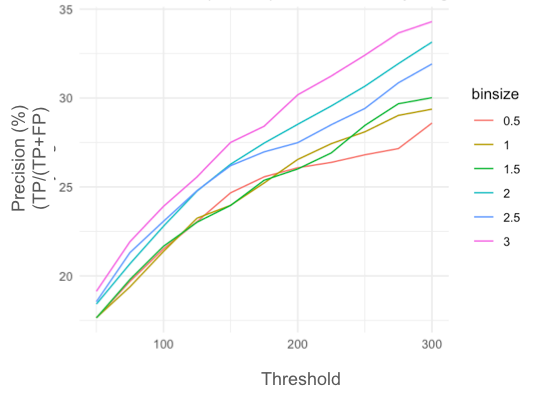

What got lopped off? When you look at how many of the segments that got lopped off were actually artifacts (the precision, or TP/(TP+FP), where FP is a clean segment wrongly flagged as an artifact), it goes the other way. In order to get high accuracy you have to get rid of more and more of the good signal.
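A small worked example shows how accuracy and precision can tell different stories. The counts below are hypothetical, chosen only to illustrate the arithmetic; they are not read off the figures.

```python
def accuracy(tp, tn, fp, fn):
    """Fraction of all segments classified correctly."""
    return (tp + tn) / (tp + tn + fp + fn)


def precision(tp, fp):
    """Fraction of removed (flagged) segments that really were artifacts."""
    flagged = tp + fp
    return tp / flagged if flagged else float("nan")


# Hypothetical counts for 100 segments, most of them clean.
tp, fp, tn, fn = 5, 5, 85, 5

acc = accuracy(tp, tn, fp, fn)
prec = precision(tp, fp)
clean_removed = fp  # clean segments thrown out along with the artifacts

print(f"accuracy={acc:.2f} precision={prec:.2f} clean segments removed={clean_removed}")
```

Because clean segments far outnumber artifacts in this made-up case, the true negatives dominate the accuracy, while the precision shows that half of what was removed was good signal.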

This is because real signal is not that different from most artifacts and therefore not so easily separated, unless the method is specifically focused on eyeblink, in which case results have been shown to be better. However, most recordings are not dominated by eyeblinks, and movements are of all sorts. In particular, not all large amplitude events are artifacts.

What should you do then?

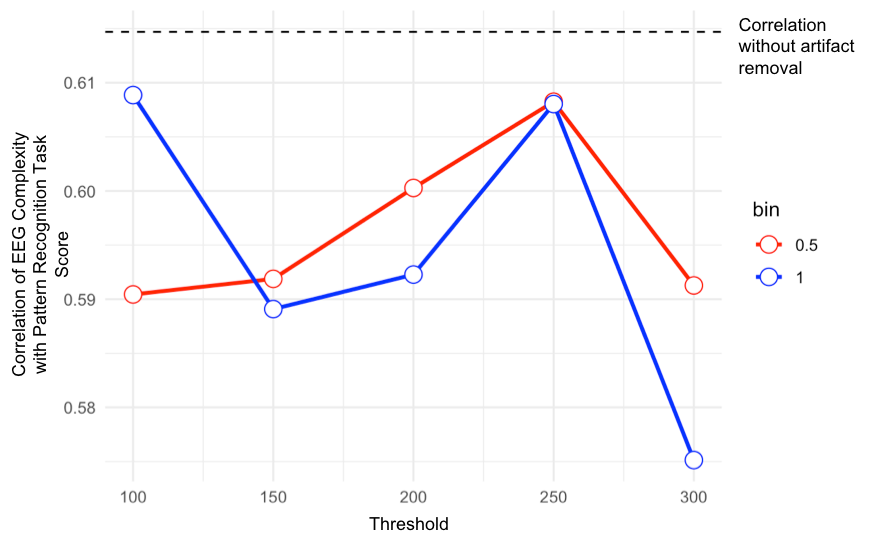

It may be tempting to just go with whatever removes the most artifact, even if it takes most of the signal with it. However, what you are left with is then no longer representative of the real signal, since certain features of the signal are now gone. In fact, we have found numerous situations where the more stringent the artifact removal, the more the relationships with measured cognitive outcomes degrade. The example shown here is the correlation of EEG complexity in the eyes closed paradigm with performance on a pattern recognition task done immediately after the recording. In general, while attempted artifact removal may have had some systematic effect of improving correlations in some range, in all cases correlations were worse than with the raw signal alone.

While some may argue that the correlations with external factors may therefore be caused by artifacts, there is strong justification for the opposite: that lopping off real signal degrades the ability to use the signal to determine relationships to brain outcomes. Thus the impact on the relationship you are exploring is an important piece of information. It would be odd if it was specifically movement artifact that was contributing disproportionately to the relationship. One way to cross check is to see whether the lopped off part of the signal has as good or a better correlation with the outcome of interest. It probably won’t.
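The cross check can be sketched as follows, assuming you have one feature value per recording computed separately on the kept and the removed segments, plus one outcome score per recording. The function names and all numbers are hypothetical placeholders, not results from this analysis.

```python
import math


def pearson_r(x, y):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    return cov / math.sqrt(var_x * var_y)


def compare_kept_vs_removed(kept_feature, removed_feature, outcome):
    """Correlate the outcome with the feature from each part of the signal."""
    return {
        "kept": pearson_r(kept_feature, outcome),
        "removed": pearson_r(removed_feature, outcome),
    }


# Made-up per-recording values, for illustration only.
kept = [0.61, 0.58, 0.70, 0.66, 0.52]
removed = [0.40, 0.55, 0.35, 0.60, 0.45]
score = [12, 11, 15, 14, 9]

result = compare_kept_vs_removed(kept, removed, score)
print(result)
```

If the removed segments correlated with the outcome as well as or better than the kept ones, that would suggest the relationship is driven by what was flagged as artifact; with real data you would also want enough recordings for the comparison to be meaningful.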

It may be better therefore to just leave in the artifacts to preserve the signal.

This post was written with analysis provided by Dhanya Parameshwaran

I agree that we should always be careful about what we remove from the data.

However, in my view, it is better to remove artefacts than to deal with contaminated data. There are published articles that sold muscle activity as cortical gamma.

There are very good tools to eliminate artefact confounds from the data. I would always recommend ICA. It can be difficult for event-free or resting data as in your example, but the removal of artefacts is quite straightforward for event-related data.

– check the ICA topographies

– check the ICA time courses

– epoch ICA time courses and compute/evaluate the evoked potential of the component

– for oscillatory activity, I always check the time-frequency decomposed and z-transformed single trials

– for oscillatory activity, compute the induced activity for a component across all trials

With these steps you can be almost entirely sure about what needs to be retained and what needs to be removed.
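One possible reading of the third step, as a minimal sketch: assume the ICA has already been run so that a component is a single time series, and that event onsets are given as sample indices. The names `epoch_component` and `evoked` and the toy data are hypothetical; a real analysis would normally use a toolbox such as EEGLAB or MNE rather than hand-written loops.

```python
def epoch_component(time_course, event_samples, pre, post):
    """Cut one epoch per event, from `pre` samples before the onset
    to `post` samples after it. Events too close to the edges are skipped."""
    epochs = []
    for onset in event_samples:
        start, stop = onset - pre, onset + post
        if start >= 0 and stop <= len(time_course):
            epochs.append(time_course[start:stop])
    return epochs


def evoked(epochs):
    """Average across epochs, sample by sample."""
    n = len(epochs)
    return [sum(samples) / n for samples in zip(*epochs)]


# Toy component time course with the same deflection after each event.
component = [0, 0, 1, 3, 1, 0, 0, 0, 1, 3, 1, 0]
events = [2, 8]  # hypothetical event onsets, in samples

epochs = epoch_component(component, events, pre=1, post=3)
average = evoked(epochs)
print(average)
```

A component whose average shows a consistent time-locked deflection carries event-related activity, which is the kind of evidence this step is meant to provide before deciding whether to keep or remove it.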

As a general thought, your considerations would require that you have some idea about the “true” underlying cortical signal. This is not the case. It would be interesting to know how you removed the artefacts in your example.

Best wishes, Enrico

Hello Enrico,

I completely agree with you on using ICA for artifact correction. Would you please elaborate on your third point? It is not clear to me. Are you talking about epoching each time course separately? If yes, then how do you do it? I’m very curious to know. You can mail me at protiknath.physio@gmail.com.

Regards, Pratik

ICA works well for more stereotypical artifacts like the eyeblink in high density recordings (32 channels or more). For more scalable brain health or consumer applications, where 4 to 16 channels are more common, ICA is not a good choice. Furthermore, even in high density recordings, when the movement artifacts are less stereotypical they are not easily distinguishable in the ICA from signal ascribed to frontal origin, and removing a component as an artifact becomes a subjective decision. We have some further articles on ICA here: https://sapienlabs.org/source-localization-in-the-eeg/ and https://sapienlabs.org/the-inverse-problem-in-eeg/

Currently, preprocessing (EEG data cleaning and artifact removal) is still a research topic. For artifacts, ICA and all its flavours can do the job. However, new and enhanced automatic methods are arriving to improve and facilitate data processing, such as AMICA, ICLabel and ASR (plugins for EEGLAB). Each method makes mathematical assumptions that sometimes do not hold, for instance in TMS, where the big artifacts are not statistically independent of the cortical signal. A trade off between visual inspection, manual processing and automatic cleaning based on metrics or machine learning has to be achieved (very common in machine learning applications too). I prefer a mixed approach where we can lose some data but the main artifacts are rejected, depending on the study or application. ERP and RS-EEG need different approaches, and there is no common automatic Swiss army knife for all signals.