A concept is a general notion of something: it does not require every detail to be present exactly, and it depends on context. How does the brain produce ‘concepts’?

Having a good concept of something is a great thing. If one has a good concept of a car, one can easily deal with cars: one can use cars, detect cars, even fix cars, invent new cars and create art about cars. One can also combine cars with other things (that is, with other concepts) to create new items. For example, one can combine a car with a trailer to obtain a useful product that neither part can offer on its own.

This would all be impossible without concepts. Arguably, concepts are the most important ingredient for making our human minds.

Understanding Concepts

Those who have been following my earlier posts may remember that, when we discussed perception, we saw how critical the flexible juggling of ideas is for perceiving the world effectively. I recommend reading the following post to understand where present theories fail when trying to explain the human ability to switch between different kinds of percepts.

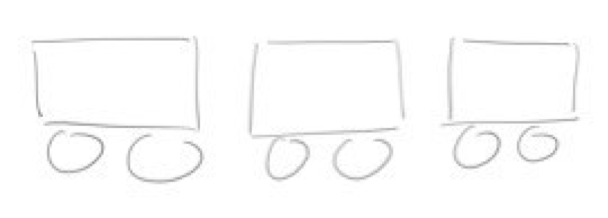

Is this a car, or is it a table with two chairs? The fact is that it could be either. We can switch our perception at will. What lies behind those percepts are concepts. We see the same sensory input differently because we activate a different concept for each percept.

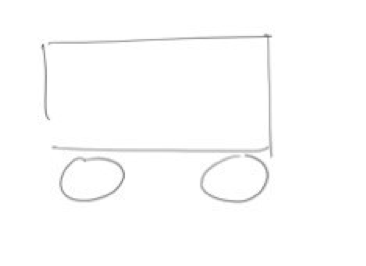

One property to note is that concepts depend on the context or the happenings of the recent past. Whenever I set the context such that the picture above is seen as a car, then this next picture is easily perceived as depicting a train:

But then, if I change the context and make the first picture be seen as a table with chairs, then the latter image naturally becomes a classroom. Context determines percepts. Context pre-activates a certain set of concepts and then the currently active concepts steer the activation of new concepts. That way, it is not only the sensory inputs that determine which concepts will be activated; the context can be at least as powerful.
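The interplay between sensory input and context can be illustrated with a small toy sketch. It is only an illustration under stated assumptions, not a model of how the brain does it: the `PRIMES` table, the `perceive` function and all the numbers are hypothetical and were chosen only to mirror the car/train and table/classroom example.

```python
# Hypothetical toy model: every name and number here is an illustrative assumption.

# An active concept pre-activates (primes) related concepts.
PRIMES = {
    "car": {"train": 1.0},
    "table with chairs": {"classroom": 1.0},
}


def perceive(sensory_evidence, active_concepts):
    """Return the candidate concept with the highest combined activation."""
    scores = {}
    for concept, evidence in sensory_evidence.items():
        # Sum the priming that currently active concepts lend to this candidate.
        priming = sum(PRIMES.get(a, {}).get(concept, 0.0) for a in active_concepts)
        scores[concept] = evidence + priming
    return max(scores, key=scores.get)


# The second picture supports both readings equally well.
second_picture = {"train": 0.5, "classroom": 0.5}

print(perceive(second_picture, ["car"]))                # train
print(perceive(second_picture, ["table with chairs"]))  # classroom
```

The sensory evidence is identical in both calls; only the previously active concept differs, and that alone decides the outcome.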

We humans need such contextual sequences. Our concepts need serial orders of related activations. This is why we like stories and we don’t like sequences of random facts. Stories are preferred because they activate and build concepts on top of each other. This is the only way to tell a complex story and this is how a new event in a story becomes meaningful—it is meaningful only within the context of all the previous events. A meaningful, semantically consistent chain of events is the best food for thought that our minds can get.

Our concepts are born out of context. The nature of the world in which we live is highly contextual. If one is driving a car at a given second, one will most likely continue driving for a while. One will not suddenly jump to painting with oil on canvas for a few seconds, then equally suddenly move to three seconds of fishing fun on a boat, only to switch just as abruptly to composing a new sentence for a letter to a friend, and then be interrupted before the end of that sentence to go back to the fishing, or the driving, or whatever.

How Does Neuroscience Explain This?

It does not. Today, no theory can explain how this switch of concepts is done. Surely, if we don’t have a good account of how concepts for perceiving a car (or a grandmother) are implemented, we also do not have an explanation of how the concept machinery works in other cognitive operations: working memory, attention, consciousness, and so on.

Connectionism, which is already in crisis for multiple other reasons, also suffers from an inability to explain concepts. As we discussed in this post, the best that connectionism can offer is a neuron with an elaborate receptive field sitting on top of a feature-extraction hierarchy. This is the classical approach: simple neurons that only sum up their inputs, wired into an elaborate network of synaptic connections. Such explanations don’t do much good for the complex mental tasks that humans regularly perform, because such structures can only interpolate between the images used as training examples. There is no way to extrapolate from images of real cars to a drawing of a car like the one shown in the illustrations above.

Connectionism would not know what to do with stories. At best, one could build a specialized model for sequences but, as explained, this model would then contradict all the other connectionist models explaining other phenomena in the brain. I named this issue the superposition problem. Fundamentally, however, connectionist models don’t care about such switches. They can switch context without noticing any problems.

Artificial Intelligence Ignores Context

Similarly, our artificial intelligence (AI) technology, which is profoundly influenced by connectionism, also does not mind switching. Cortana, Alexa, and Siri don’t get confused, frustrated or tired if you ask them random, unrelated questions. These assistants will do equally well with and without a story. In fact, deep learning models, the technology behind those AI assistants, typically even require that their training data sets be thoroughly randomized; deviations from randomness, i.e., meaningful groupings of the training materials, tend to impair their ability to learn. This is fundamentally different from how humans learn.
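Why grouping hurts such learners can be shown with a deliberately tiny sketch. It is not a deep learning model: `online_estimate` is a hypothetical one-parameter learner that nudges its estimate toward each sample, and a regular interleaving of the two topics stands in for random shuffling so that the result is deterministic. All values are assumptions chosen for illustration.

```python
def online_estimate(samples, learning_rate=0.2):
    """Track a running estimate, nudging it toward each sample in turn."""
    estimate = 0.0
    for x in samples:
        estimate += learning_rate * (x - estimate)
    return estimate


# Two "topics" with different target values; the overall mean is 0.5.
topic_a = [0.0] * 20
topic_b = [1.0] * 20

# Grouped: the whole of topic A first, then the whole of topic B.
grouped = topic_a + topic_b
# Interleaved: A, B, A, B, ... (a deterministic stand-in for shuffling).
interleaved = [x for pair in zip(topic_a, topic_b) for x in pair]

grouped_estimate = online_estimate(grouped)
interleaved_estimate = online_estimate(interleaved)
print(grouped_estimate, interleaved_estimate)
```

With the grouped order the estimate ends up close to the value of the last topic, as if the first topic had never been seen. With the interleaved order it stays much nearer the overall mean.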

We can see that as a no-free-lunch tradeoff. The price that connectionism and today’s AI pay for being able to switch context easily is that they will never be able to match human-like concepts. Only a learning world in which events are temporally and meaningfully connected can provide proper stimuli for developing concepts. An agent that has lived in such a world needs this connectedness to develop and use concepts that offer an understanding of that world, much like we humans do in our human world.

But this does not mean that we can simply put a connectionist theory into a context-rich environment and expect success. This will not work, for the above-mentioned reasons. What we need is a learning machinery that is naturally tuned to acquiring and applying concepts. One requirement for such a machinery is that it be able to consider the recent past. Sadly, connectionist models erase the past all too easily. New inputs simply tend to overwrite the remnants of the preceding inputs. Again, if one tries to engineer a special connectionist solution, the superposition problem hits back immediately. As I discussed earlier, engineering is not how one builds a successful theory.
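The requirement of considering the recent past can be made concrete with a minimal sketch. It only contrasts a system whose response is overwritten by each new input with one that keeps a decaying remnant of earlier inputs; it is not the mechanism proposed in this series, and `stateless_response`, `leaky_trace` and the `decay` value are hypothetical names and numbers used for illustration.

```python
def stateless_response(inputs):
    """Each output depends only on the current input; the past leaves no trace."""
    return [x for x in inputs]


def leaky_trace(inputs, decay=0.5):
    """Each state mixes the current input with a decaying remnant of earlier ones."""
    state = 0.0
    states = []
    for x in inputs:
        state = decay * state + x
        states.append(state)
    return states


# Two input sequences that differ only in what happened two steps ago.
with_history = [1.0, 0.0, 0.0]
without_history = [0.0, 0.0, 0.0]

# The stateless system ends in the same condition for both sequences.
print(stateless_response(with_history)[-1], stateless_response(without_history)[-1])
# The leaky trace still carries a remnant of the earlier event.
print(leaky_trace(with_history)[-1], leaky_trace(without_history)[-1])
```

Only the second system can let an event from the recent past influence how the current input is treated.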

The question, then, is what can be done. Stay tuned for my ideas on how fast adaptations can solve this problem.

Reference

Nikolić, D. (2015). Practopoiesis: Or how life fosters a mind. Journal of Theoretical Biology, 373, 40-61.

This is part of the blog series on mind and brain problems by Danko Nikolić. To see the entire series, click here.